Well, this is my first post in English. Let's see how it goes.

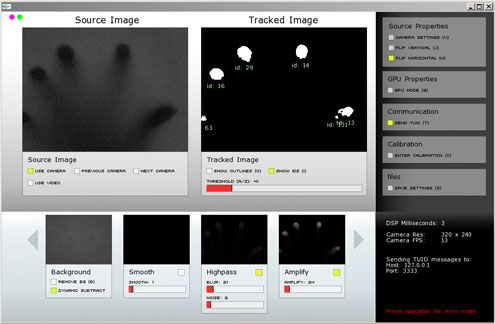

David (called “the mighty Brain” below) and I (called “the clumsy Hands” below) tried, and actually managed, to build our own multitouch screen using FTIR (frustrated total internal reflection) technology. We got most of the information we needed to get started from Nuigroup. It is a great community, and we wouldn't have managed without them.

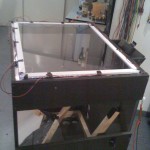

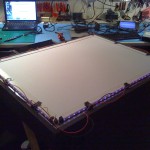

For our first try, we wanted to keep the budget low. We repurposed our old tables to build the main box, tried different silicone-based options for the compliant surface, opted for a Rosco projection screen, used common webcams (PS3 Eye) for tracking, and finally bought a relatively cheap DLP projector.

Our aim was to build a quick-and-dirty Multi(please)Touch from scratch and to learn by experimenting. We now plan to build a nicer V2.

The Hands know how to handle the work when it comes to getting something built.

The Brain knows where to find information and how to write code that works.

We plan to post some articles in the coming weeks to discuss the different aspects of the whole process.

Finally, here are some pics.